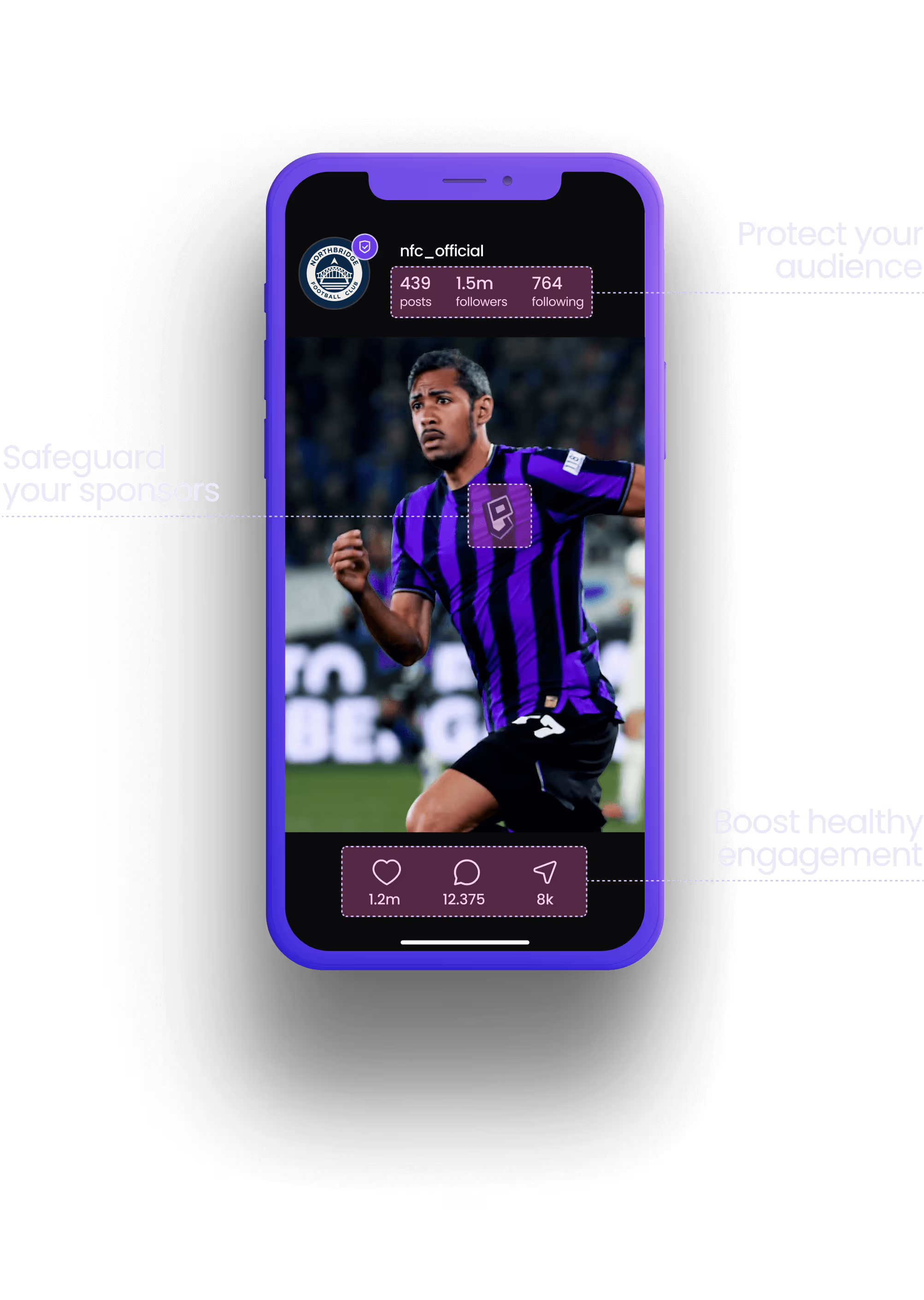

Your socials should be safe from abuse, spam and toxicity. Protect your profile, your people (and your profits) with Freedom2hear: your AI content filter with real human EQ.

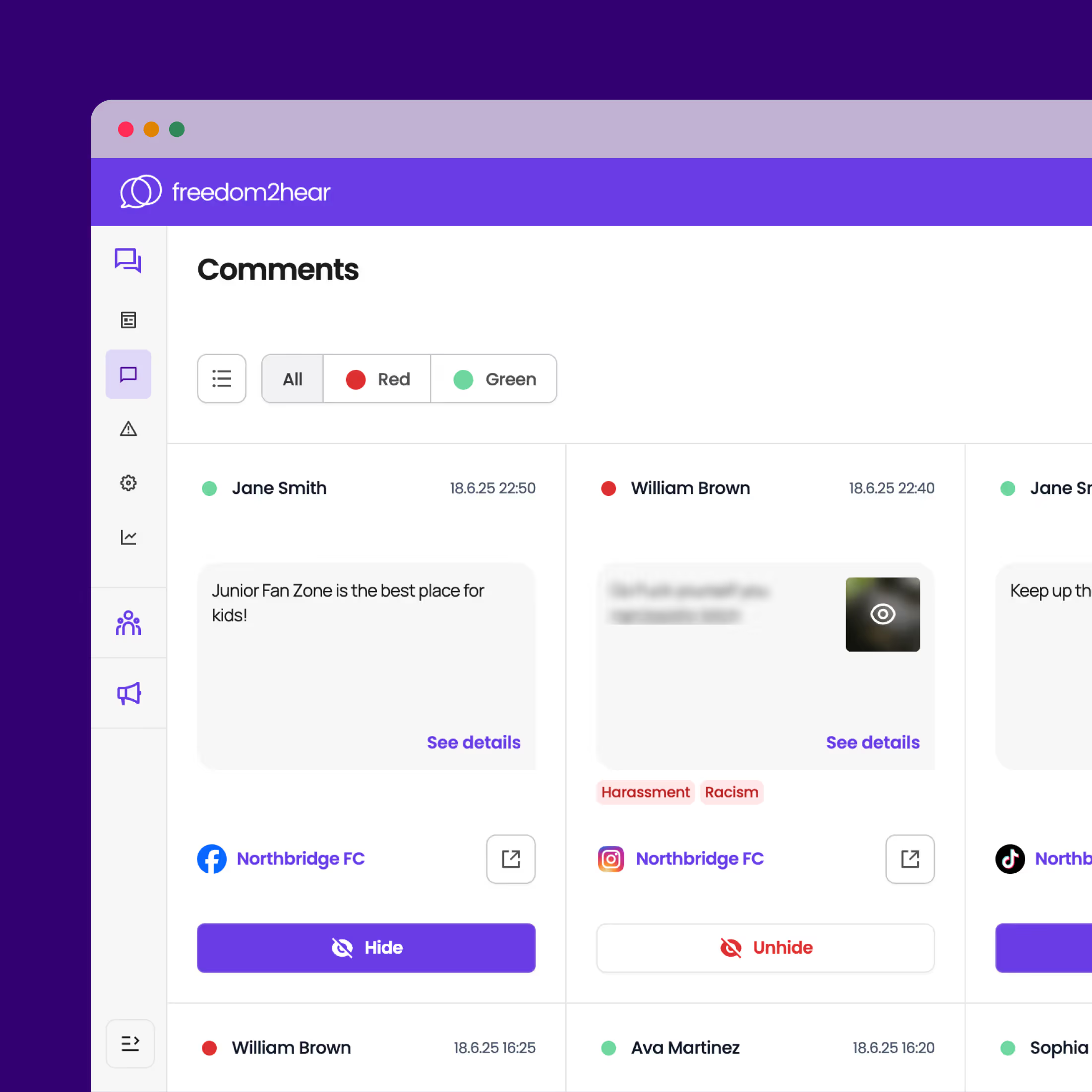

You’re working hard to build your brand online, so imagine a world where you don’t have to manually check for toxic comments that could damage your social content or upset your online community. Instead, you can focus fully on growing engagement, staying compliant and driving digital success.

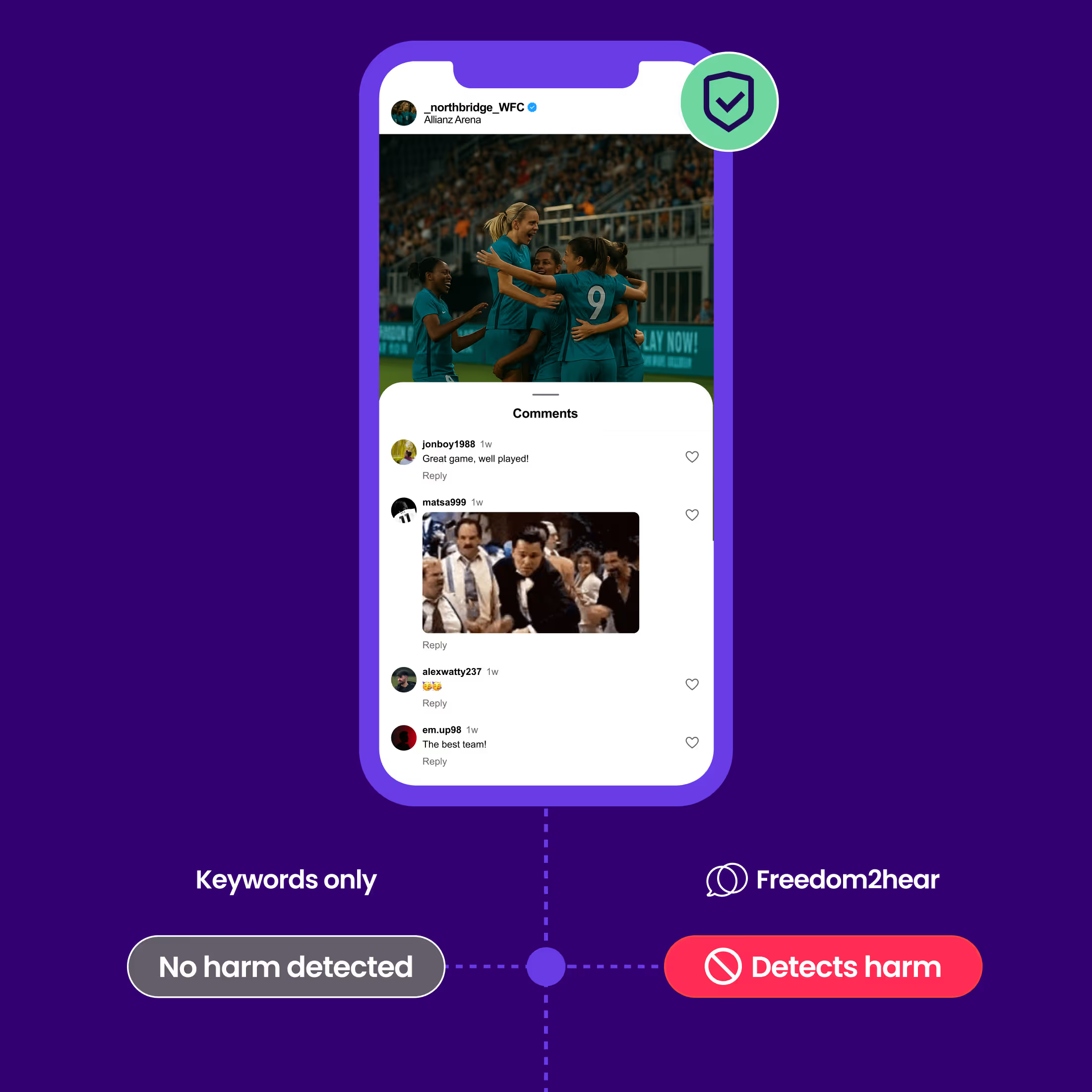

Unlike keyword-based products, Freedom2hear is AI with emotional intelligence: we understand the meaning behind a comment or link, not just the words in it. Our AI and our human experts dig deeper, so you don’t have to spend time deciding what’s harmful and you stay protected online.

We understand what’s happening online. We stay on top of trends, whether that’s harmful emojis, algoslang or the deeper contextual meaning behind comments. Our AI, combined with our world-class team, ensures harmful content doesn’t go live on your platforms.

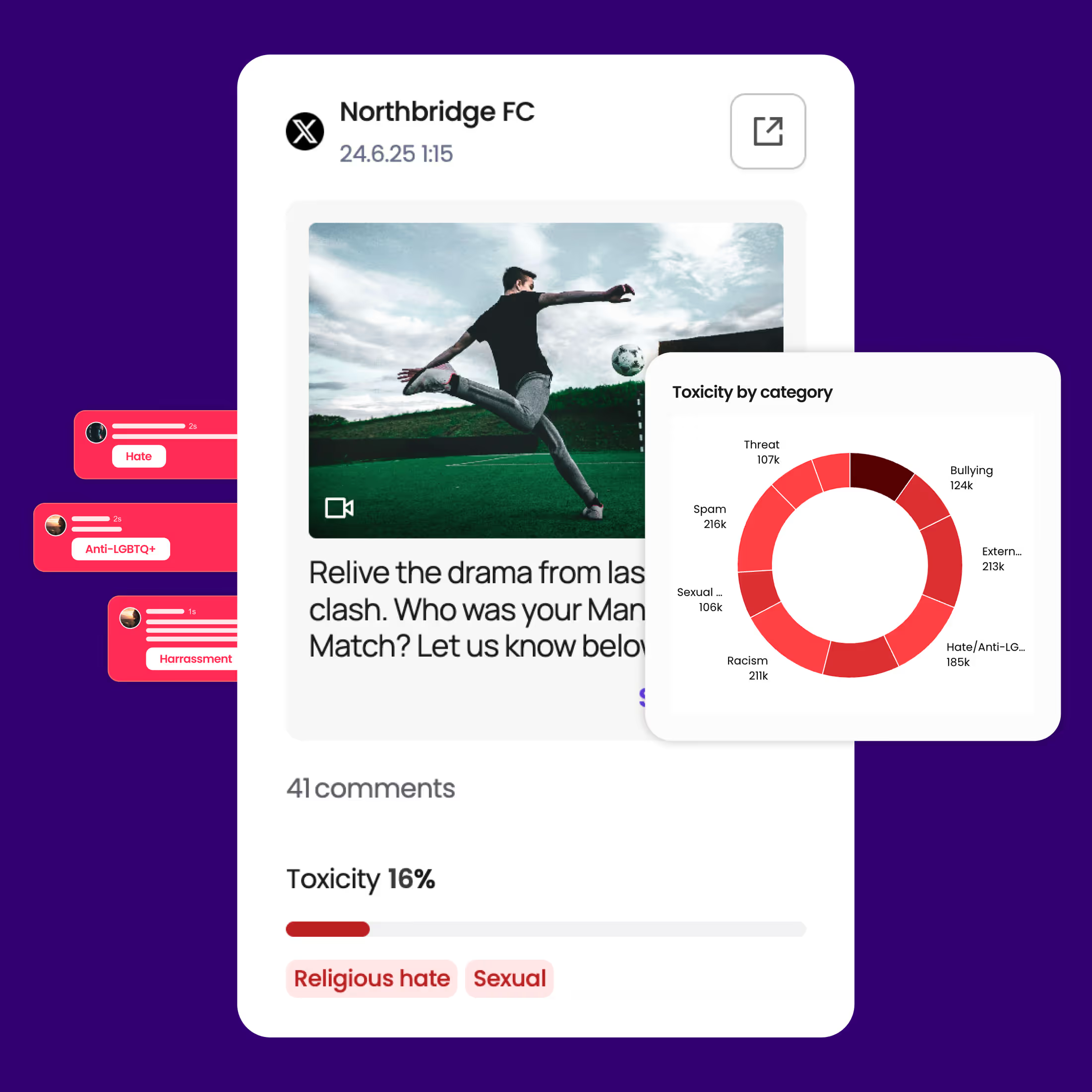

We combine 12 AI filters, a hate speech blacklist and detailed emotional analysis to capture and filter online toxicity in the blink of an eye, as it happens.

Our easy-to-use dashboard connects to your social channels, communities and apps to automatically detect and mute toxicity, surface trends and deliver detailed insights in real time, so you always know what’s happening on your socials.

Traditional tools only see keywords and surface language, which can stifle genuine conversation. Freedom2hear understands the context behind the conversation.

“Safety online shouldn’t be optional. It’s about protecting people and helping brands build trust.”

Freedom2hear is your digital defence system, protecting you across two products.