Solution

Don’t let scammers and trolls ruin the creativity and community you’re building on your social channels. Our patented AI defence system allows you to balance engagement and expression while protecting against harm online.

Give your team Freedom2hear.

Find out how it works below.

Our solution integrates seamlessly into leading social and communication platforms.

We make onboarding and integration straightforward, and our expert team is on hand whenever you need support, so you keep full control of what is moderated and why.

Our best-in-class AI insights, supported by real human moderators, act in real time, leaving you free to focus on creativity, community and content and to build genuine, positive engagement.

Other solutions just filter keywords, which still leaves you at risk.

Drawing on up-to-date knowledge of nuance, trends and coded language, Freedom2hear’s AI technology analyses emotions with contextual human understanding.

Freedom2hear equips you with powerful tools to moderate and manage online interactions effectively, ensuring safer, healthier digital spaces for everyone.

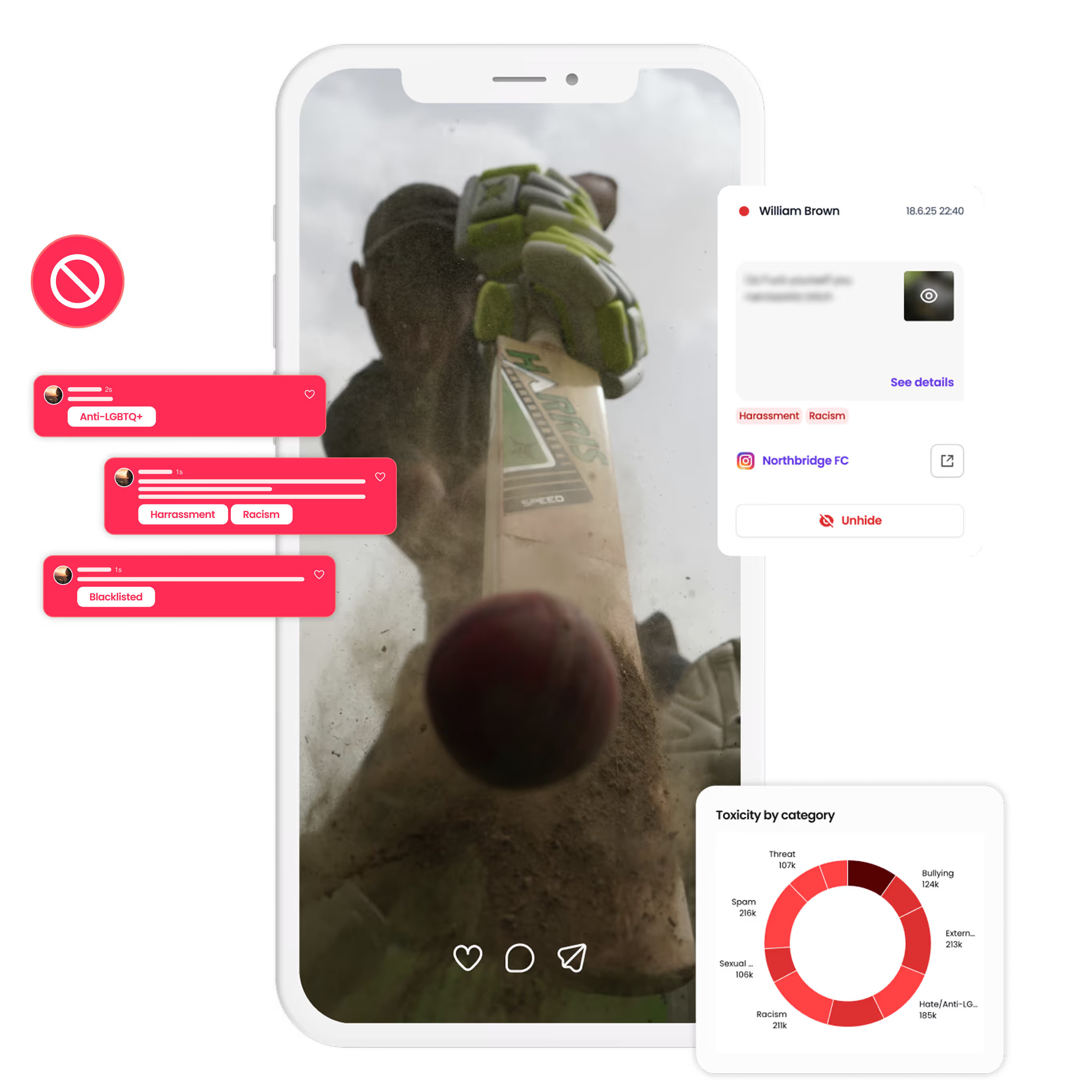

Manage flagged content through our intuitive Moderation Hub. Review flagged posts and take action in real time to protect your community from harmful content.

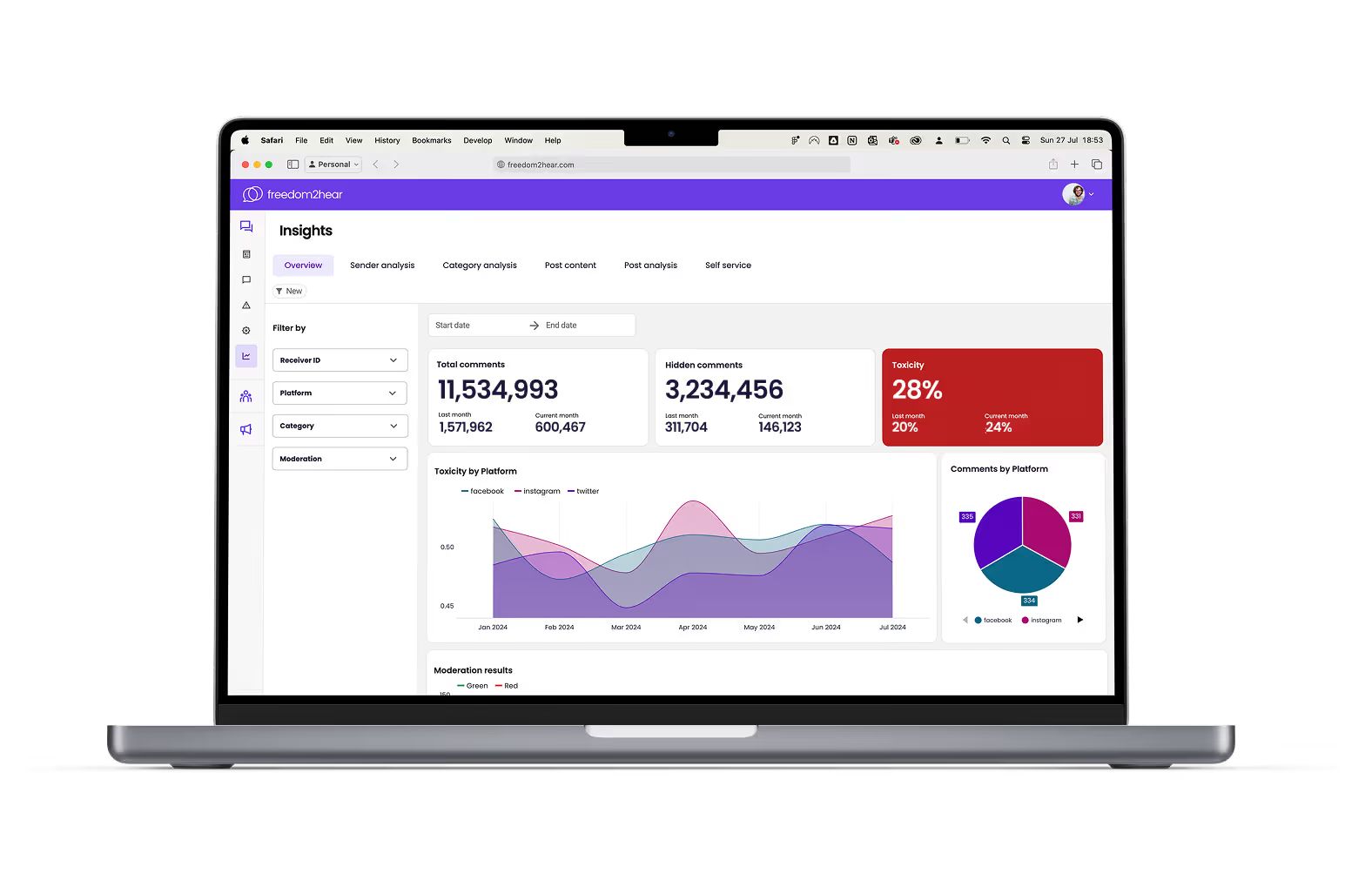

Measure toxicity levels across your channels with advanced analytics tools. Gain insights into patterns of harmful behaviour and make data-driven decisions to improve safety.

Tailor your moderation strategy to the needs of your community by targeting specific types of toxicity and by allowing or banning specific keywords.

Get started with a personalised walkthrough of our solutions.

Team up with our experts to create a bespoke policy that reflects your community's unique cultural context.

Onboard with ease and ensure adoption within your organisation thanks to our experts' support.

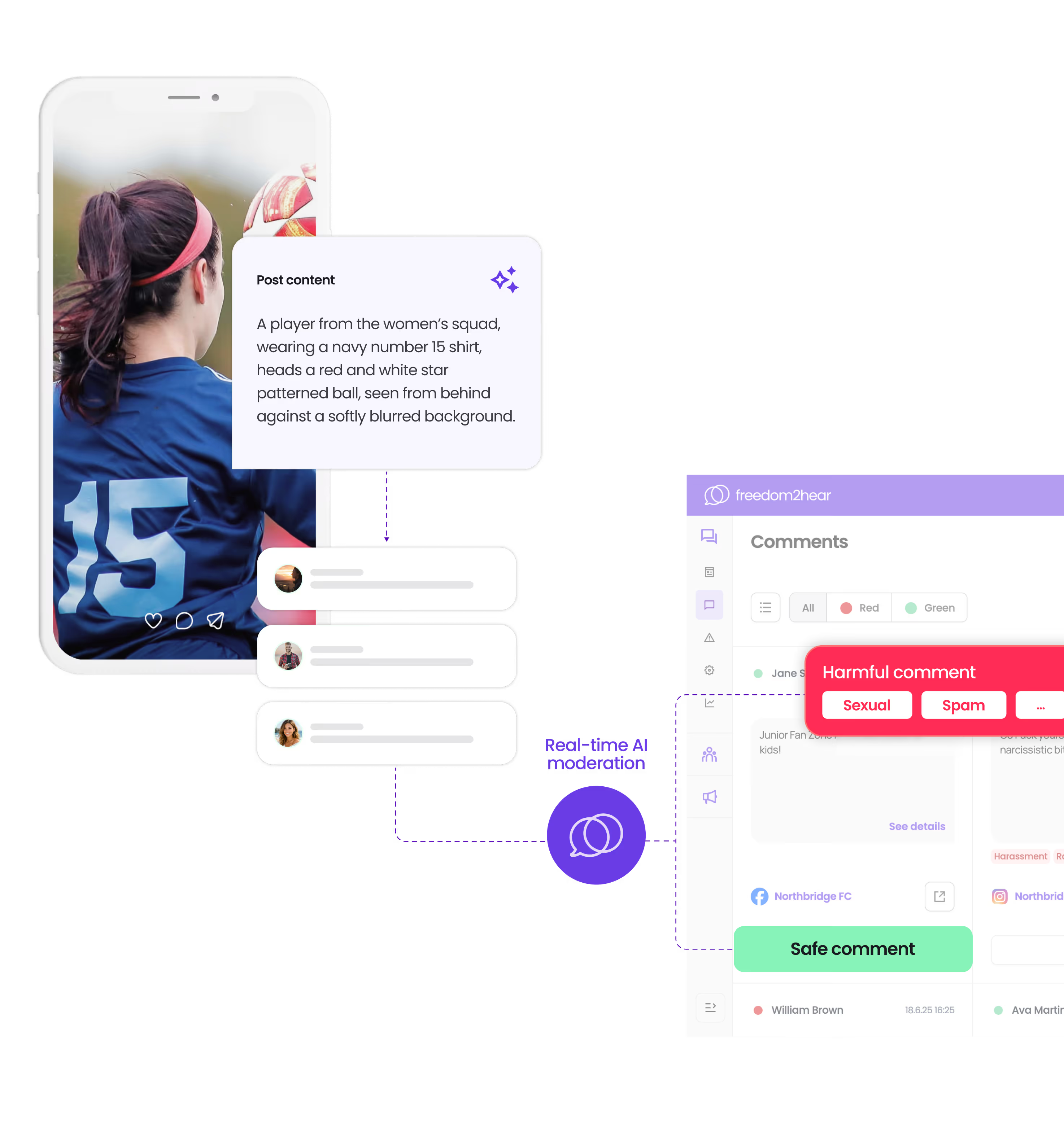

Our emotion-based AI content moderation service uses advanced artificial intelligence algorithms to analyse the emotional content of text, images and videos posted online. Understanding context and sentiment helps us to identify and manage content that may not match the values of your online social or internal communities, such as hate, threats, spam, profanity, racism and abuse.

Our AI system analyses various cues such as language, tone, context, and visual elements to understand the emotional impact of content. It then applies predefined rules and criteria to classify and moderate content accordingly.

Our service can moderate a wide range of content formats including text-based posts, comments, images, and videos across various social media channels and internal platforms.

Our AI is trained to detect a broad spectrum of emotions, including joy, sadness, anger, fear and disgust, as well as more nuanced signals such as sarcasm or irony. Freedom2hear operates across 96 languages, with accuracy levels of 99%+.

While our AI plays a significant role in content moderation, human oversight is also essential. Where this is preferred over full automation, our system flags potentially sensitive content for review by human moderators, who make the final decision based on context and guidelines.
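As a rough illustration of the choice between human review and full automation described above, the routing could be sketched as follows. This is a minimal sketch under stated assumptions, not Freedom2hear's implementation: the `route` function, the `Decision` type, the 0-to-1 score scale and the default threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    action: str  # one of "allow", "review", "hide" (hypothetical labels)
    reason: str


def route(score: float, flag_threshold: float = 0.5,
          human_review: bool = True) -> Decision:
    """Route one piece of content given a hypothetical harm score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score < flag_threshold:
        return Decision("allow", "below flag threshold")
    if human_review:
        # Flagged content waits for a human moderator's final decision.
        return Decision("review", "flagged for a human moderator")
    # Full automation: act on the flag without a human in the loop.
    return Decision("hide", "flagged; full automation enabled")
```

With these assumed defaults, content scoring above the threshold is queued for a moderator unless `human_review` is switched off, in which case it is acted on immediately.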

Our AI model has been trained on vast datasets to achieve high accuracy in emotion detection. However, like any AI system, it is not perfect. We continuously refine our algorithms to improve accuracy and effectiveness, with current accuracy levels of 99%+ and rising.

We are staunch supporters of everyone’s freedom of speech. Our solution does not impair any person’s right to comment or post. It does, however, offer protection for communities who do not wish to receive unwelcome content. This is why we have named our solution ‘Freedom2hear’.

Absolutely not. We monitor only publicly posted social media content.

We offer customisable moderation settings to suit the specific needs and preferences of our clients. This includes adjusting sensitivity levels, defining custom rules and integrating with existing moderation workflows.
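To make the idea of sensitivity levels and custom rules more concrete, here is a minimal sketch of what such settings might look like. Everything in it is an assumption made for illustration: the `ModerationPolicy` class, the three sensitivity names, the threshold values and the banned-keyword rule are hypothetical and do not describe the actual product configuration.

```python
import re
from dataclasses import dataclass, field

# Hypothetical mapping: higher sensitivity means a lower score is enough to flag.
SENSITIVITY_THRESHOLDS = {"low": 0.8, "medium": 0.6, "high": 0.4}


@dataclass
class ModerationPolicy:
    sensitivity: str = "medium"
    banned_keywords: set = field(default_factory=set)

    def should_flag(self, text: str, score: float) -> bool:
        """Flag on a banned keyword, or when the score reaches the threshold."""
        words = set(re.findall(r"[a-z0-9']+", text.lower()))
        banned = {keyword.lower() for keyword in self.banned_keywords}
        if words & banned:
            return True  # custom rule: banned keywords always flag
        return score >= SENSITIVITY_THRESHOLDS[self.sensitivity]


strict = ModerationPolicy(sensitivity="high", banned_keywords={"scam"})
relaxed = ModerationPolicy(sensitivity="low")
```

In this sketch the same comment with an assumed score of 0.45 would be flagged under `strict` but not under `relaxed`, which is the kind of per-community tuning the settings are meant to allow.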

Ready to see how Freedom2hear can transform your content moderation strategy? Book a free demo with one of our experts and discover how our solutions can help you create safer and more engaging online spaces.